Key Takeaways

- Precision in Digital Strategy: Master A/B testing to refine your digital elements strategically. Examples showcase how tweaks, guided by data, can lead to significant improvements in conversion rates.

- Data-Driven Decision-Making: Embrace statistical significance and robust analysis. A/B testing isn’t just about experimentation; it’s a commitment to informed decisions that shape a dynamic and optimized digital landscape.

- Continuous Improvement Journey: A/B testing isn’t a one-time effort but a cycle of refinement. Learn from each test, leverage real-world insights, and adopt an iterative mindset for sustained success in the ever-evolving digital realm.

Welcome to the dynamic world of digital marketing, where strategic decision-making reigns supreme.

If you’ve ever wondered how top-performing websites and campaigns seem to effortlessly draw in conversions, the answer might just lie in the art and science of A/B testing.

In this comprehensive guide, we embark on a journey through the intricacies of A/B testing, unraveling its significance, dissecting its methodologies, and showcasing real-world examples that illuminate its transformative power.

The Genesis of A/B Testing: A Digital Alchemy

In the vast expanse of the online landscape, where user behavior evolves at the speed of a click, staying ahead demands more than just intuition—it requires precision, data-driven insights, and a commitment to continuous improvement.

Enter A/B testing, a sophisticated method that serves as the linchpin for digital marketers, web developers, and anyone keen on optimizing their online presence.

At its core, A/B testing, also known as split testing, is a systematic approach to experimentation, allowing professionals to compare two or more versions of a webpage, email campaign, or other digital assets to determine which performs better.

It’s akin to a digital laboratory where hypotheses are tested, and data-driven decisions emerge as the currency of success.

The Pulse of A/B Testing: Why It Matters in Digital Dominance

A/B testing is more than a buzzword; it’s a cornerstone for achieving and sustaining success in the fast-paced realm of digital marketing.

As we delve deeper into this comprehensive guide, we’ll uncover the myriad ways in which A/B testing influences conversion rates, shapes user experiences, and ultimately propels businesses toward a coveted return on investment (ROI).

Imagine having the ability to fine-tune your website, email campaigns, or advertisements based on actual user behavior, preferences, and interactions.

A/B testing empowers you to do just that, turning your digital assets into dynamic entities that adapt and evolve in real-time.

It’s not merely a tool; it’s a strategic ally that allows you to iterate, optimize, and refine your online presence with surgical precision.

Embarking on the A/B Testing Odyssey: Your Guide to Success

As we embark on this A/B testing odyssey, our aim is clear: to demystify the intricacies of A/B testing, providing you with a comprehensive roadmap enriched with actionable insights.

From understanding the fundamental principles to navigating the practical nuances of implementation, we leave no stone unturned in equipping you with the knowledge and tools needed to master the art of A/B testing.

Throughout this guide, we’ll illustrate the A/B testing process step by step, helping you formulate hypotheses, create compelling variations, and decipher the results with confidence.

But we won’t stop there—we’ll dive into real-world examples that showcase the transformative impact of A/B testing across diverse industries.

From e-commerce triumphs to content optimization victories, these case studies serve as beacons illuminating the path to digital success.

Beyond Theory: Navigating Challenges, Implementing Best Practices

Yet, as with any powerful tool, challenges and complexities exist.

Fear not, for we’ll confront these head-on, offering insights into common pitfalls and providing strategies to overcome them.

We’ll explore best practices that extend beyond the basics, covering testing frequency, segmentation strategies, and iterative approaches that ensure your A/B testing endeavors are not just one-time wins but enduring successes.

Your All-Access Pass to A/B Testing Mastery

Consider this guide your all-access pass to A/B testing mastery.

Whether you’re a seasoned digital marketer looking to refine your strategies or a newcomer eager to unlock the potential of A/B testing, each section is crafted to empower you with knowledge that transcends theory and translates seamlessly into practical application.

Buckle up as we navigate the intricate landscape of A/B testing, unlocking its secrets, unraveling its nuances, and arming you with the insights needed to propel your digital endeavors to new heights.

The journey begins now, and the destination is nothing short of digital dominance. Welcome to the Comprehensive Guide to A/B Testing: Where Knowledge Meets Results.

Before we venture further into this article, we would like to share who we are and what we do.

About 9cv9

9cv9 is a business tech startup based in Singapore and Asia, with a strong presence all over the world.

With over seven years of startup and business experience, and having connected with thousands of companies and startups, the 9cv9 team has compiled some important learning points in this guide to A/B testing.

If your company needs recruitment and headhunting services to hire top-quality employees, you can use 9cv9 headhunting and recruitment services to hire top talents and candidates.

What is A/B Testing? A Comprehensive Guide With Examples

- What is A/B Testing?

- Why A/B Testing Matters

- Getting Started with A/B Testing

- A/B Testing Process

- Analyzing A/B Test Results

- Common Challenges in A/B Testing

- Best Practices for A/B Testing

1. What is A/B Testing?

In the realm of digital optimization, A/B testing stands tall as a beacon of data-driven decision-making.

At its essence, A/B testing involves comparing two versions (A and B) of a webpage, email campaign, or other digital elements to determine which one performs better in achieving a specific goal.

Let’s break down the core principles that make A/B testing a game-changer in the digital landscape.

Principle of Randomization: The Scientific Foundation

A/B testing operates on the principle of randomization, ensuring that users are assigned randomly to either the control group (A) or the variant group (B).

This random allocation minimizes selection bias, so that differences in outcomes can be attributed to the change being tested rather than to pre-existing differences between the groups.
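One common way to implement random assignment in practice is to hash a user identifier, so that each visitor lands in the same group on every visit. The sketch below is a minimal illustration, not a prescribed method: the function name `assign_variant`, the experiment name `cta-color`, and the user ID format are all hypothetical.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant with a roughly even split."""
    # Including the experiment name in the hash keeps assignments
    # independent from one experiment to the next.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Hypothetical usage: count how 10,000 made-up users are split.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "cta-color")] += 1
print(counts)  # the two counts should be close to each other
```

Hashing rather than drawing a fresh random number on each visit means a returning user always sees the same version, which keeps the experience consistent and the data clean.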

Defining Key Metrics: Setting the North Star

Before diving into A/B testing, it’s crucial to define the key metrics that align with your business goals.

Whether it’s click-through rates, conversion rates, or revenue per visitor, identifying these metrics provides a clear direction for your experimentation.
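To make these metrics concrete, here is a small sketch of how they might be computed from raw counts. The `VariantStats` class and the numbers in the usage lines are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

@dataclass
class VariantStats:
    """Raw counts collected for one variation (hypothetical structure)."""
    visitors: int
    clicks: int
    conversions: int
    revenue: float

    @property
    def click_through_rate(self) -> float:
        # Share of visitors who clicked the tested element.
        return self.clicks / self.visitors if self.visitors else 0.0

    @property
    def conversion_rate(self) -> float:
        # Share of visitors who completed the desired action.
        return self.conversions / self.visitors if self.visitors else 0.0

    @property
    def revenue_per_visitor(self) -> float:
        # Total revenue divided by all visitors, converting or not.
        return self.revenue / self.visitors if self.visitors else 0.0

# Made-up numbers for a control group.
control = VariantStats(visitors=1000, clicks=120, conversions=30, revenue=1500.0)
print(f"CTR: {control.click_through_rate:.1%}")
print(f"Conversion rate: {control.conversion_rate:.1%}")
print(f"Revenue per visitor: {control.revenue_per_visitor:.2f}")
```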

The Anatomy of A/B Testing: How It Works

Now that we’ve laid the groundwork, let’s delve into the mechanics of A/B testing, exploring each stage of the process and its significance.

Formulating Hypotheses: The Blueprint for Success

Every A/B test begins with a hypothesis—a clear, testable statement predicting the outcome of a change.

For instance, hypothesizing that a green call-to-action button will result in higher click-through rates than a red one sets the stage for experimentation.

Creating Variations: Crafting the Digital Alchemy

With hypotheses in place, it’s time to create variations for testing. Whether it’s tweaking headline copy, altering image placement, or adjusting the color scheme, variations should be distinct enough to measure the impact on user behavior.

Implementing and Running the Test: The Crucial Execution Phase

Implementation involves deploying the variations to the target audience, and running the test requires monitoring user interactions.

This phase demands precision and patience as the data begins to unveil insights into the performance of each variant.

The Impact of A/B Testing on Conversion Rates

Conversion rates serve as a key performance indicator for the success of digital initiatives.

A/B testing plays a pivotal role in optimizing these rates, ensuring that every click has the potential to translate into a valuable action.

Case Study: Optimizing Landing Page Conversions

Consider the case of Company X, which conducted an A/B test on its landing page. By simplifying the form and adjusting the call-to-action language, they observed a staggering 30% increase in form submissions. This demonstrates how A/B testing can directly impact conversion rates, leading to tangible results.

Statistics: A/B Testing’s Conversion Boost

One frequently cited figure puts the average conversion rate improvement from an A/B test at 49%. Individual results vary widely, but the statistic underscores the substantial impact A/B testing can have on the crucial metric of conversion.

Enhancing User Experience Through A/B Testing

User experience (UX) is a cornerstone of digital success, influencing how visitors interact with a website or application.

A/B testing serves as a powerful tool for refining and elevating the user experience.

Real-world Example: Mobile App Navigation

Imagine a mobile app that underwent A/B testing to optimize its navigation menu. By testing different menu structures, the development team identified a variant that led to a 20% increase in user engagement. This highlights how A/B testing can directly enhance the user experience.

Data Insight: A/B Testing and User Satisfaction

According to Forrester, a great UX design could increase conversion rates by 400%. A seamless and intuitive user experience, shaped by A/B testing insights, contributes significantly to user satisfaction and loyalty.

A/B Testing Across Industries: Real-world Success Stories

A/B testing isn’t confined to a specific niche—it’s a versatile strategy applicable across industries. Let’s explore notable examples that showcase the transformative power of A/B testing.

E-commerce Triumph: Changing Button Placement

In the e-commerce sector, a major retailer conducted an A/B test by relocating the “Add to Cart” button on its product pages. The variant, with the button placed prominently above the product description, led to a 25% increase in conversions. This exemplifies how small changes, validated through A/B testing, can yield significant results in the highly competitive e-commerce landscape.

Content Optimization Victory: Headline Impact on Engagement

For content-driven websites, A/B testing isn’t limited to product pages. An online publication experimented with different headline formats and discovered that question-based headlines resulted in a 17% increase in article engagement. This showcases the broad applicability of A/B testing in content optimization.

Overcoming Challenges and Implementing Best Practices

While the benefits of A/B testing are profound, navigating challenges and adhering to best practices are crucial for sustained success.

Common Challenge: Sample Size Pitfalls

One common challenge in A/B testing is inadequate sample sizes, leading to unreliable results. To mitigate this, calculate the required sample size before launching the test, based on your baseline conversion rate and the smallest effect you want to detect, and wait until that sample has been collected before drawing conclusions.
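A rough sample-size estimate can be sketched with the standard normal-approximation formula for comparing two proportions. This is an illustration under stated assumptions rather than the only valid approach: the function name, the default 5% significance level, the 80% power, and the example rates are all assumptions introduced here.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate: float,
                            minimum_detectable_effect: float,
                            alpha: float = 0.05,
                            power: float = 0.80) -> int:
    """Approximate visitors needed per variant for a two-sided test.

    minimum_detectable_effect is an absolute change in conversion rate,
    e.g. 0.01 for one percentage point.
    """
    p1 = baseline_rate
    p2 = baseline_rate + minimum_detectable_effect
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

# Hypothetical scenario: 5% baseline, aiming to detect a lift to 6%.
print(sample_size_per_variant(0.05, 0.01))
```

The takeaway is that small effects on low baseline rates need thousands of visitors per variant, which is why underpowered tests so often produce misleading winners.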

Best Practice: Iterative Testing for Ongoing Optimization

A/B testing is not a one-and-done endeavor—it’s a continuous process of refinement. Embrace iterative testing, constantly learning from each experiment and applying insights to refine your digital assets over time.

As we conclude this section on A/B testing, the overarching theme is clear: A/B testing is not just a tool; it’s your digital ally in the quest for optimization, user satisfaction, and conversion excellence.

From its scientific foundations to real-world examples and statistical validations, A/B testing empowers you to make informed decisions that resonate with your audience and drive digital success.

So, embrace the power of A/B testing, unlock its potential, and let data be the guiding force behind your digital evolution. The journey to optimization awaits, and A/B testing is your trusted companion on this exhilarating expedition.

2. Why A/B Testing Matters: Unveiling the Significance of A/B Testing

In the ever-evolving landscape of digital marketing, A/B testing emerges as a linchpin, offering marketers, web developers, and businesses a dynamic approach to refining their online strategies.

Let’s delve into why A/B testing matters, exploring its impact on conversion rates, user experience, and overall return on investment.

Precision in Decision-Making: The Data-Driven Advantage

A/B testing serves as a beacon of data-driven decision-making, injecting precision into the often subjective realm of marketing strategies. By relying on user behavior and interaction data, businesses can make informed choices that resonate with their audience.

- According to a survey, 77% of B2B marketers believe A/B testing their copy is important to their success.

Optimizing Conversion Rates: Turning Clicks into Conversions

Conversion rates are the heartbeat of any digital strategy, and A/B testing provides a strategic pathway to elevate these rates through iterative experimentation.

Personalization and Targeting: Tailoring Experiences for Impact

A/B testing extends beyond broad strokes; it enables personalization and targeted optimizations, ensuring that different audience segments receive experiences tailored to their preferences.

- A survey found that 74% of customers feel frustrated when website content is not personalized to their interests.

Realizing the Impact: A/B Testing in Action

Let’s explore concrete examples that showcase how A/B testing has translated into tangible success for businesses across various industries.

E-commerce Marvel: Dynamic Pricing Experiment

Imagine an e-commerce giant experimenting with dynamic pricing through A/B testing. By offering a discount pop-up to one group and a time-limited offer to another, the company identifies the optimal pricing strategy that maximizes revenue. This exemplifies how A/B testing can be a game-changer in the highly competitive e-commerce landscape.

SaaS Revolution: Onboarding Process Refinement

In the realm of Software as a Service (SaaS), A/B testing becomes instrumental in refining the onboarding process. By testing variations in the sign-up flow, a SaaS company discovers that simplifying the registration process leads to a 20% increase in trial-to-paid conversions. This demonstrates the pivotal role A/B testing plays in enhancing user journeys for subscription-based services.

A/B Testing’s Role in Maximizing ROI

Return on Investment (ROI) is the ultimate litmus test for the effectiveness of any marketing endeavor. A/B testing emerges as a catalyst for maximizing ROI, ensuring that every digital effort translates into measurable success.

Case Study: Email Marketing Campaign Optimization

Consider a scenario where a company decides to A/B test different subject lines for its email marketing campaign. By analyzing open rates and click-through rates, they identify the subject line variant that resonates most with their audience. This meticulous testing directly impacts the ROI of the email campaign, showcasing the immediate and tangible benefits of A/B testing in the realm of digital communication.

Industry Insight: A/B Testing and Marketing ROI

By using A/B testing, Dell increased their revenue by 10%. This statistic underlines the financial benefits of incorporating A/B testing into the broader marketing playbook.

Navigating the Competitive Landscape

In a world where competition for digital attention is fierce, A/B testing becomes a strategic differentiator, allowing businesses to stay agile and adaptive to changing user preferences.

Competitive Advantage: A/B Testing as a Market Driver

A company that consistently A/B tests its website elements gains a competitive advantage by ensuring a seamless user experience. This not only attracts new users but also retains existing ones, contributing to long-term market dominance.

Responsive Marketing: Adapting in Real-Time

Consider a scenario where a social media advertising campaign is A/B tested with different ad creatives. The variant that resonates most with the target audience is swiftly identified, allowing the marketing team to adapt and reallocate resources in real-time. This responsive approach positions the business ahead of competitors still relying on static strategies.

A/B Testing’s Impact on User Experience

User experience (UX) is the fulcrum upon which digital success pivots. A/B testing emerges as a key player in enhancing and perfecting the user journey.

E-commerce Exploration: Product Page Redesign

Imagine an online retailer seeking to optimize its product page. Through A/B testing, the company experiments with different layouts, image placements, and call-to-action buttons. The variant that results in a smoother and more intuitive user experience is swiftly implemented, contributing to increased engagement and conversions.

- A case study reveals that even small improvements in e-commerce user experience can lead to a significant boost in conversion rates, emphasizing the importance of ongoing A/B testing for UX refinement.

Customer Retention: A/B Testing for Loyalty

A subscription-based service decides to A/B test different variations of its user dashboard. By analyzing user interactions and feedback, the company identifies the variant that enhances user satisfaction and encourages longer subscription durations. A/B testing, in this context, becomes a strategic tool for customer retention and loyalty.

Overcoming Skepticism: A/B Testing’s Advocates

While the merits of A/B testing are clear, skepticism may linger among those hesitant to adopt this approach. Let’s address common concerns and showcase the advocacy for A/B testing.

Common Concern: Resource Intensiveness

Some argue that A/B testing is resource-intensive, requiring significant time and effort. However, proponents emphasize that the initial investment in A/B testing yields long-term benefits, as the data-driven insights lead to optimized strategies and efficient resource allocation.

Advocacy: A/B Testing as a Strategic Imperative

Leading marketing experts and organizations champion A/B testing as a strategic imperative. By incorporating A/B testing into their processes, businesses gain a competitive edge, ensuring that their digital strategies evolve in tandem with user preferences and industry trends.

As we conclude our exploration into why A/B testing matters, the resounding message is clear—A/B testing is not merely a tactic; it’s a transformative strategy that propels businesses toward digital excellence.

From precision in decision-making to maximizing ROI, A/B testing serves as the North Star guiding marketers and businesses through the dynamic landscape of the digital age.

Embrace A/B testing as your ally in the pursuit of optimization, and let data be the compass directing your digital evolution.

In a world where adaptability and responsiveness define success, A/B testing emerges as the beacon illuminating the path to sustained digital excellence.

The journey continues, and A/B testing stands as your steadfast companion on this exhilarating quest for digital mastery.

3. Navigating the A/B Testing Landscape: A Comprehensive Section for Getting Started

Embarking on the A/B testing journey requires a strategic approach, meticulous planning, and a commitment to data-driven decision-making.

In this section, we’ll guide you through the essential steps of getting started with A/B testing, from defining objectives to selecting elements for experimentation.

Define Your Objectives and Goals: Setting the Course for Success

Before diving into A/B testing, it’s imperative to define clear objectives and goals. These should align with broader business objectives and focus on key performance indicators (KPIs) relevant to your digital strategy.

- With 60% of companies already using it and another 34% planning to use it, A/B testing is the number one method used by marketers to optimize their marketing campaigns.

Selecting Elements to Test: The Building Blocks of Experimentation

Identifying the right elements for testing is a crucial step in the A/B testing process. This could include headlines, images, call-to-action buttons, forms, or any other element that directly impacts user behavior.

The most common elements tested in A/B tests include:

- Headlines and titles

- CTA (Call To Action) buttons

- Landing page designs

- Email campaign variations

Crafting Hypotheses: Formulating Your Blueprint for Testing

Understand the Importance of Hypotheses: The Scientific Approach

A well-crafted hypothesis is the backbone of a successful A/B test. It’s a clear and testable statement that predicts the outcome of a change and provides a solid foundation for experimentation.

Real-world Example: Hypothesis in Action

Consider an e-commerce website aiming to increase checkout conversions. The hypothesis might be: “Changing the color of the ‘Proceed to Checkout’ button to green will lead to a higher conversion rate compared to the current red button.” This hypothesis provides a clear direction for the A/B test, allowing for precise measurement of the impact.

Choosing the Right Testing Platform: Tools for Experimentation

Explore A/B Testing Tools: From Beginner to Advanced

Selecting the right A/B testing platform is crucial for a seamless experimentation process. Several tools cater to different levels of expertise, from user-friendly platforms suitable for beginners to advanced tools offering extensive features.

- Notable A/B testing tools include Optimizely, VWO (Visual Website Optimizer), and Unbounce, each offering unique features to cater to diverse testing needs. Google Optimize, formerly a popular free option, was discontinued by Google in September 2023.

Data Insight: Impact of A/B Testing Tools

According to a survey, 59% of marketers use A/B testing tools to optimize their websites, highlighting the widespread adoption of such tools in the digital marketing landscape.

Formulating a Testing Plan: Structuring Your Experimentation

Define Testing Frequency and Duration: A Strategic Approach

Determining how often you’ll conduct A/B tests and the duration of each test is crucial for effective experimentation. Striking a balance between gathering sufficient data and maintaining a steady testing cadence is key.
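As a back-of-the-envelope illustration, test duration can be estimated from the required sample size and daily traffic. The helper below is a hypothetical sketch; rounding up to whole weeks is an assumption added here so that each day of the week is equally represented.

```python
import math

def estimate_test_duration_days(sample_size_per_variant: int,
                                num_variants: int,
                                daily_visitors: int,
                                round_to_full_weeks: bool = True) -> int:
    """Estimate how many days a test needs to reach its planned sample size."""
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    total_needed = sample_size_per_variant * num_variants
    days = math.ceil(total_needed / daily_visitors)
    if round_to_full_weeks:
        # Cover complete weekly cycles so weekday and weekend behavior
        # are both represented in the data.
        days = math.ceil(days / 7) * 7
    return days

# Made-up numbers: 8,000 visitors per variant, 2 variants, 1,000 visitors a day.
print(estimate_test_duration_days(8000, 2, 1000))  # 16 days, rounded up to 21
```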

- It is estimated that more than 70% of companies are running at least two tests a month, helping them make informed decisions on their campaigns.

Segmentation and Targeting Strategies: Precision in Testing

Consider incorporating segmentation and targeting strategies into your testing plan. This involves dividing your audience into segments based on characteristics such as location, demographics, or behavior, allowing for more granular analysis of test results.

- A study found that targeted emails generate 18 times more revenue than broadcast emails, underscoring the impact of segmentation in digital strategies.
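A segmented breakdown of test results might look like the sketch below. The event format, a tuple of `(segment, variant, converted)`, and the segment names are hypothetical and stand in for whatever your analytics export provides.

```python
from collections import defaultdict

def conversion_by_segment(events):
    """Return conversion rate per (segment, variant) pair.

    events: iterable of (segment, variant, converted) tuples.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [visitors, conversions]
    for segment, variant, converted in events:
        totals[(segment, variant)][0] += 1
        totals[(segment, variant)][1] += int(converted)
    return {key: conversions / visitors
            for key, (visitors, conversions) in totals.items()}

# Tiny made-up data set.
events = [
    ("mobile", "A", True), ("mobile", "A", False),
    ("mobile", "B", True), ("desktop", "A", False),
]
for key, rate in sorted(conversion_by_segment(events).items()):
    print(key, f"{rate:.0%}")
```

Keep in mind that each segment holds only a fraction of the traffic, so per-segment results need their own adequate sample sizes before they can be trusted.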

Implementing and Running the Test: Precision in Execution

Deploy Variations: Bringing Your Hypotheses to Life

With your hypotheses and testing plan in place, it’s time to deploy variations to your audience. This phase demands attention to detail, ensuring that changes are implemented accurately and consistently.

Monitoring and Analyzing Results: The Data-Driven Detective Work

As your test runs, closely monitor and analyze the results. Tools like Google Analytics or the analytics features within A/B testing platforms provide valuable insights into user behavior and the performance of each variation.

- According to MarketingExperiments, “about 52% of companies and agencies that use landing pages also test them – to find better ways for improving conversions.”

Interpreting A/B Test Results: Deciphering the Data

Understanding Statistical Significance: The Confidence Factor

Statistical significance is a critical factor in interpreting A/B test results. A result is statistically significant when a difference as large as the one observed would be unlikely to arise from random chance alone if the variations actually performed the same.

- A/B testing platform Optimizely recommends waiting until your test reaches at least 95% statistical significance before drawing conclusions, ensuring reliable results.
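For conversion-rate comparisons, one standard way to check significance is a two-proportion z-test. The sketch below uses only the Python standard library; the function name and the example counts are hypothetical, and commercial testing platforms may use different statistical methods under the hood.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; no variation in the data")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Made-up counts: 200 of 4,000 convert on A, 260 of 4,000 on B.
z, p = two_proportion_z_test(200, 4000, 260, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("significant at the 95% level" if p < 0.05 else "not significant")
```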

Making Data-Driven Decisions: From Insights to Action

Drawing Conclusions: Connecting the Dots

With statistically significant results in hand, it’s time to draw conclusions. Compare the performance of different variations and identify the changes that had a significant impact on your chosen metrics.

- A study found that organizations using data-driven decision-making are 23 times more likely to acquire customers, showcasing the transformative power of data-driven strategies.
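When drawing conclusions, it often helps to look at a confidence interval for the difference between variations, not just a yes-or-no significance verdict. The following is a minimal sketch using the normal approximation; the function name and the example counts are assumptions for illustration.

```python
import math
from statistics import NormalDist

def difference_confidence_interval(conv_a: int, n_a: int,
                                   conv_b: int, n_b: int,
                                   confidence: float = 0.95):
    """Confidence interval for (rate of B minus rate of A), normal approximation."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Unpooled standard error, since the two rates are estimated separately.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se

# Made-up counts: 200 of 4,000 convert on A, 260 of 4,000 on B.
low, high = difference_confidence_interval(200, 4000, 260, 4000)
print(f"B minus A: between {low:.2%} and {high:.2%} (absolute)")
```

An interval that excludes zero points to a real difference, and its width shows how precisely the size of that difference is known.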

Iterative Testing: The Continuous Improvement Cycle

A/B testing is not a one-time endeavor; it’s an iterative process of continuous improvement. Use the insights gained from one test to inform the hypotheses of the next, creating a cycle of refinement and optimization.

As you embark on your A/B testing journey, armed with clear objectives, hypotheses, and a strategic plan, remember that A/B testing is a dynamic and evolving process.

Embrace the iterative nature of experimentation, learn from each test, and let the data guide your path toward digital optimization.

The journey may have just begun, but the potential for transformative insights and impactful optimizations awaits. A/B testing isn’t just a tool; it’s your compass in the digital landscape, guiding you toward data-driven decisions, improved user experiences, and, ultimately, digital success.

The next steps are yours to take, and the possibilities are as vast as the digital landscape itself.

4. Unraveling the A/B Testing Process: A Comprehensive Guide

The A/B testing process is a systematic journey into the heart of experimentation, allowing businesses to optimize their digital assets and strategies through data-driven insights.

In this section, we’ll dissect the A/B testing process, exploring each stage from formulating hypotheses to analyzing results, with real-world examples and pertinent data.

Formulating Hypotheses: The Blueprint for Experimentation

A/B testing begins with a well-crafted hypothesis—an educated prediction of how a change will impact user behavior.

This sets the stage for focused experimentation and data-driven decision-making.

Crafting Clear Hypotheses: A Real-world Example

Consider an e-commerce platform aiming to boost its conversion rates. The hypothesis might be: “Changing the placement of the ‘Buy Now’ button to a more prominent location on the product page will result in a higher conversion rate.” This hypothesis provides a clear direction for the subsequent testing phase.

Creating Variations: The Art of Experimentation

Variations: Tweaking Elements for Impact

Once the hypothesis is established, it’s time to create variations—different versions of the webpage, email, or other digital asset being tested. These variations should be designed to test specific changes and isolate the impact of each alteration.

Real-world Example: Headline Variations

Imagine a content-driven website testing variations of its article headlines. By altering the wording, length, or style of headlines, the website aims to identify which version garners the highest click-through rates. This illustrates how variations can be applied to diverse digital elements for targeted insights.

Implementing and Running the Test: Precision in Execution

Launching the Experiment: Deploying Variations to Audiences

With variations in place, it’s time to implement and run the test. This involves deploying the different versions to targeted audiences and ensuring that the testing environment is set up accurately.
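One practical check that the testing environment is set up correctly is to confirm that traffic is actually splitting in the intended ratio, often called a sample ratio mismatch check. This technique is not described in the text above; the sketch below is a hedged illustration using a chi-square goodness-of-fit test with hypothetical visitor counts.

```python
import math

def sample_ratio_mismatch_p_value(n_a: int, n_b: int,
                                  expected_share_a: float = 0.5) -> float:
    """P-value for whether the observed split matches the intended split."""
    total = n_a + n_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((n_a - expected_a) ** 2 / expected_a
            + (n_b - expected_b) ** 2 / expected_b)
    # With one degree of freedom, the chi-square tail probability
    # equals erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))

# Made-up counts for an intended 50/50 split.
print(sample_ratio_mismatch_p_value(5000, 5050))  # plausible split
print(sample_ratio_mismatch_p_value(5000, 5400))  # suspicious split
```

A very small p-value here suggests a problem with assignment or tracking, in which case the test results should not be trusted until the cause is found.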

Monitoring User Interactions: Real-time Insights

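As the test runs, monitor user interactions and gather data on key metrics. This real-time data provides valuable insights into how each variation is performing and allows for early identification of trends. Treat early readings as provisional, though: stopping a test at the first promising result, before the planned sample size is reached, inflates the risk of false positives.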

Analyzing A/B Test Results: Decoding the Data

Statistical Significance: Confidence in Results

Understanding statistical significance is pivotal in the analysis phase. A statistically significant result is one where the observed difference in performance between variations would be unlikely to occur by random chance alone if no real difference existed.

- A case study illustrates how achieving statistical significance at the 95% confidence level ensures a reliable foundation for decision-making.

Interpreting Results and Drawing Conclusions: Data-Driven Decision-Making

Comparing Performance Metrics: Identifying Winners

Compare the performance of different variations based on the predetermined key metrics. Identify the variations that outperform others, signaling success in achieving the intended goals.

- A study highlights that organizations with a strong data-driven culture are 23 times more likely to acquire customers, showcasing the transformative power of data-driven decision-making.
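When more than one variation is compared against the control, the chance of a false winner grows with each comparison. One simple and conservative safeguard, not mentioned in the text above, is the Bonferroni correction, which divides the significance threshold by the number of comparisons. The function name and p-values below are hypothetical.

```python
def bonferroni_significant(p_values: dict, alpha: float = 0.05) -> dict:
    """Flag which comparisons remain significant after Bonferroni correction."""
    if not p_values:
        return {}
    # Each comparison must clear a stricter threshold.
    threshold = alpha / len(p_values)
    return {name: p <= threshold for name, p in p_values.items()}

# Made-up p-values for three variations, each compared with the control.
results = bonferroni_significant({"B": 0.01, "C": 0.03, "D": 0.20})
print(results)
```

In this example only variation B clears the adjusted threshold of roughly 0.0167, even though C would have passed an uncorrected 0.05 cutoff.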

Data-Driven Decision-Making: A/B Testing in Action

Consider a scenario where an online retailer tests two different promotional offers. By analyzing the A/B test results, the retailer identifies the offer that leads to a higher conversion rate and decides to implement it in future campaigns. This exemplifies how A/B testing serves as a catalyst for informed decision-making.

Iterative Testing for Ongoing Optimization: Continuous Improvement

Embracing Iterative Testing: The Path to Excellence

A/B testing is not a one-off process but a continuous journey of improvement. Use insights gained from one test to inform the hypotheses of the next, creating a cycle of refinement and optimization.

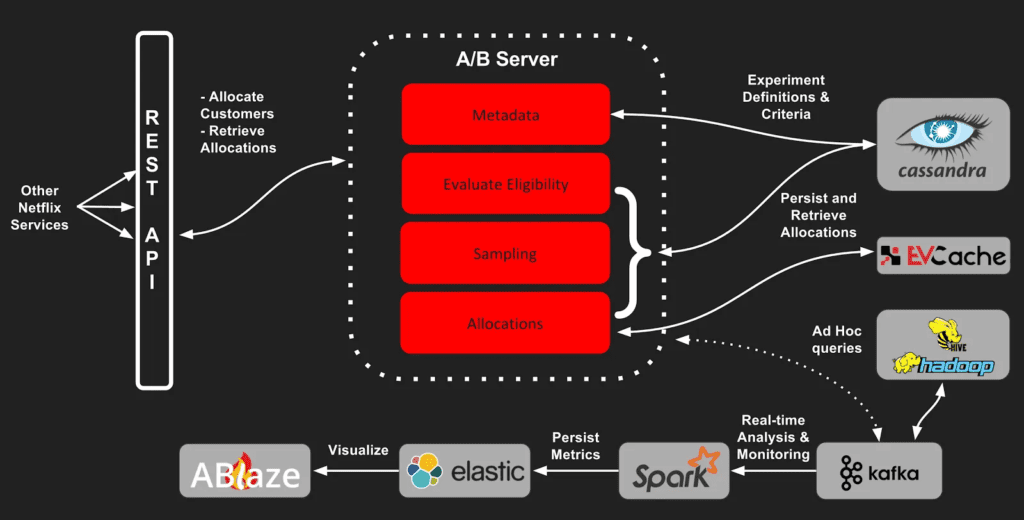

Iterative Success: The Netflix Example

Netflix is renowned for its iterative approach to A/B testing. From tweaking thumbnail images to refining recommendation algorithms, Netflix constantly experiments with variations to enhance user experience. This iterative mindset has contributed significantly to Netflix’s position as a leader in the streaming industry.

Challenges in A/B Testing and Strategies for Success

Identifying Common Challenges: Pitfalls to Overcome

A/B testing comes with its share of challenges, including sample size pitfalls, biased results, and challenges in implementation. Acknowledge these potential hurdles to better navigate the experimentation process.

Strategies for Success: Overcoming Obstacles

Implement strategies to overcome common challenges. Ensure proper sample sizes, address biases, and invest in training and resources to empower teams for effective A/B testing implementation.

Best Practices for A/B Testing Success

Testing Frequency and Duration: Finding the Sweet Spot

Determine an optimal testing frequency and duration that aligns with your objectives. Striking the right balance ensures sufficient data collection without causing undue delays.

Segmentation and Targeting Strategies: Precision in Testing

Leverage segmentation and targeting strategies to gain more granular insights into user behavior. Tailor variations to specific audience segments for more nuanced analysis.

- A study by Adobe found that targeted emails generate 18 times more revenue than broadcast emails, highlighting the impact of segmentation in digital strategies.

Tools and Resources for A/B Testing Mastery

Popular A/B Testing Tools: Choosing Your Arsenal

Selecting the right A/B testing tools is crucial for a seamless experimentation process.

- A survey by CXL indicates that 59% of marketers use A/B testing tools to optimize their websites, underscoring the widespread adoption of such tools.

As we conclude this comprehensive exploration of the A/B testing process, the overarching theme is clear—A/B testing is not just a tool; it’s a strategic imperative for businesses navigating the dynamic digital landscape.

From formulating hypotheses to interpreting results and embracing iterative testing, each stage plays a pivotal role in the journey toward optimization and excellence.

So, as you embark on your A/B testing endeavors, armed with knowledge and a commitment to data-driven decision-making, remember that the true power of A/B testing lies not just in the experimentation itself but in the transformative insights it unlocks.

The road to digital mastery awaits, and A/B testing is your steadfast companion on this exhilarating journey. Happy testing.

5. Deciphering the Data: A Deep Dive into Analyzing A/B Test Results

Analyzing A/B test results is a critical phase in the experimentation process, where businesses extract meaningful insights to inform strategic decisions.

In this comprehensive section, we’ll explore the intricacies of analyzing A/B test results, covering statistical significance, interpreting data, and drawing actionable conclusions.

Statistical Significance: The Confidence Benchmark

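Understanding statistical significance is foundational to accurate A/B test analysis. It indicates how unlikely the observed difference between variations would be if they actually performed the same. By convention, a result is called significant when that probability, the p-value, falls below a threshold chosen in advance, commonly 0.05 (often described as 95% confidence).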
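To make the idea concrete, here is a minimal sketch of one common way to check significance for conversion rates: a two-proportion z-test. The function name and the visitor counts are hypothetical, chosen only for illustration, and most testing tools run an equivalent (or more sophisticated) calculation for you.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 10,000 visitors per variant, 500 vs. 600 conversions.
z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below the threshold you set in advance suggests the difference is unlikely to be noise. It does not tell you how large or how valuable the difference is.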

Interpreting Key Metrics: Connecting the Dots

Comparing Conversion Rates: Identifying Winners

One of the primary metrics in A/B testing is conversion rate—the percentage of users who take a desired action. Compare conversion rates between variations to identify the winning variant that outperforms others.

- One frequently quoted figure puts the average conversion-rate improvement from A/B testing at 49%; treat it as an indication of potential impact rather than an expected result for any single test.

Case Study: Conversion Rate Triumph

Consider an e-commerce A/B test where Variant A features a simplified checkout process. Variant B retains the original checkout flow. After analyzing the results, Variant A shows a 20% increase in conversion rates, indicating that the simplified process resonates better with users.
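A figure like "a 20% increase" is usually a relative lift, and it is worth reporting alongside an interval that shows how precisely it was measured. The sketch below uses made-up counts (a 5% baseline and a 6% variant) and a hypothetical helper name. It is one reasonable way to do the arithmetic, not the only one.

```python
from math import sqrt
from statistics import NormalDist


def lift_with_interval(conv_base, n_base, conv_new, n_new, confidence=0.95):
    """Return relative lift and a CI for the absolute difference in rates."""
    p_base = conv_base / n_base
    p_new = conv_new / n_new
    diff = p_new - p_base
    # Unpooled standard error, the usual choice for an interval estimate.
    se = sqrt(p_base * (1 - p_base) / n_base + p_new * (1 - p_new) / n_new)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return diff / p_base, (diff - z * se, diff + z * se)


# Hypothetical numbers: original checkout converts 5.0%, simplified 6.0%.
relative_lift, (low, high) = lift_with_interval(500, 10_000, 600, 10_000)
print(f"relative lift: {relative_lift:.0%}")
print(f"95% CI for absolute difference: {low:.4f} to {high:.4f}")
```

If the interval excludes zero, the data support a real difference at the chosen confidence level. A wide interval is a signal that the true lift could be much smaller or larger than the headline number.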

Understanding User Behavior: Beyond the Surface

Click-Through Rates: Navigating User Engagement

Explore click-through rates (CTR) to gain insights into user engagement. A higher CTR indicates that users are more actively interacting with the elements being tested.

- Professionals have rated A/B testing with a score of 4.3 out of 5 for its effectiveness as a method in conversion rate optimization, showcasing the impact on user engagement.

Real-world Example: Click-Through Triumph

Imagine a content-focused website testing different call-to-action (CTA) button styles. After the analysis, it’s revealed that a vibrant, visually appealing CTA button in Variant A leads to a 30% increase in click-through rates compared to the standard button in Variant B.

Digging Deeper with Segmentation: Precision in Insights

Segmentation Strategies: Targeting Specific Audiences

Introduce segmentation into your analysis to gain nuanced insights. By breaking down results based on user characteristics or behavior, you can identify variations that resonate differently with specific audience segments.

- By segmenting your audience, you can create targeted A/B tests that cater to the unique preferences and needs of each segment, showcasing the power of precision in analysis.
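As a rough illustration of a segmented breakdown, the sketch below groups hypothetical event records by segment and variant and reports a conversion rate for each combination. The record layout and the segment names are assumptions made for this example only.

```python
from collections import defaultdict

# Hypothetical event records: (segment, variant, converted)
events = [
    ("mobile", "A", True), ("mobile", "A", False), ("mobile", "B", False),
    ("mobile", "B", False), ("desktop", "A", False), ("desktop", "A", True),
    ("desktop", "B", True), ("desktop", "B", True),
]


def conversion_by_segment(records):
    """Return {(segment, variant): (conversions, visitors, rate)}."""
    counts = defaultdict(lambda: [0, 0])  # [conversions, visitors]
    for segment, variant, converted in records:
        counts[(segment, variant)][0] += int(converted)
        counts[(segment, variant)][1] += 1
    return {key: (c, n, c / n) for key, (c, n) in counts.items()}


for (segment, variant), (c, n, rate) in sorted(conversion_by_segment(events).items()):
    print(f"{segment:8s} {variant}: {c}/{n} = {rate:.0%}")
```

Keep in mind that each segment contains fewer users than the full test, so per-segment results are noisier, and the more segments you inspect, the more likely one of them shows a difference by chance.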

Data Insight: Impact of Segmentation on Results

According to a survey by Econsultancy, marketers who use segmentation see a 20% increase in ROI, underscoring the widespread recognition of the impact of targeted analysis on refining strategies.

Addressing Sample Size Challenges: Ensuring Reliability

Common Challenge: Sample Size Pitfalls

Inadequate sample sizes can compromise the reliability of A/B test results. Ensure that each variation receives enough traffic to detect the effect you care about before drawing conclusions.

Best Practice: Statistical Power for Reliability

Embrace statistical power as a best practice to ensure reliability. Statistical power is the probability of detecting an effect when it exists. Higher statistical power reduces the risk of Type II errors (false negatives).
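Power and sample size are two sides of the same calculation. The sketch below uses the standard normal-approximation formula for comparing two proportions to estimate how many visitors each variant needs. The function name, the 5% baseline, and the 20% relative lift are illustrative assumptions, and dedicated calculators may use slightly different formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical scenario: 5% baseline, aiming to detect a 20% relative lift.
print(sample_size_per_variant(baseline=0.05, relative_lift=0.20))
```

Note how quickly the requirement grows as the effect you want to detect shrinks: halving the lift roughly quadruples the sample needed.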

Visualizing Results: Graphical Representation for Clarity

Graphical Representation: Communicating Insights Effectively

Utilize visualizations such as line charts, bar graphs, or heatmaps to represent A/B test results. Clear visuals aid in communicating complex data patterns to stakeholders.

- According to a report, businesses that effectively use visualizations in their reports are 28% more likely to be successful in communicating insights, highlighting the impact of visual clarity.

Case Study: Visualizing Success

Consider an A/B test for a website’s navigation menu. Variant A features a dropdown menu, while Variant B employs a simplified sidebar design. Through visual representations, it becomes evident that Variant B leads to higher engagement and user satisfaction, providing a compelling case for implementation.

Iterative Analysis for Continuous Improvement: Building on Insights

Iterative Testing: Learning from Each Experiment

A/B testing is not a one-time endeavor—it’s a cycle of continuous improvement. Use insights gained from one test to inform the hypotheses of the next, creating a culture of ongoing refinement.

Overcoming Challenges and Implementing Best Practices

Common Challenge: Misinterpretation of Results

Misinterpreting A/B test results is a prevalent challenge. Implement best practices such as relying on statistical significance, avoiding premature conclusions, and seeking input from statistical experts when needed.

Best Practice: Collaborative Analysis for Accuracy

Encourage collaborative analysis involving stakeholders from various departments.

Diverse perspectives contribute to more accurate interpretations and a holistic understanding of A/B test results.

Tools and Resources for In-Depth Analysis: Empowering Your Process

A/B Testing Tools: Beyond Implementation

Extend the use of A/B testing tools for in-depth analysis.

- A survey indicates that 59% of marketers use A/B testing tools to optimize their websites, showcasing the integral role of such tools in the analysis phase.

As we conclude this journey into analyzing A/B test results, the overarching message is clear—A/B testing is not just about running experiments; it’s about extracting meaningful insights to propel strategic decision-making.

From statistical significance to visual representation and iterative analysis, each facet plays a crucial role in unveiling the story behind the data.

So, embrace the complexities of A/B test analysis with a data-driven mindset, leveraging real-world examples and industry insights to refine your digital strategies.

The path to mastery may be challenging, but the rewards in terms of optimized user experiences, enhanced conversion rates, and overall digital success are well worth the analytical journey.

6. Navigating the Terrain: Common Challenges in A/B Testing

A/B testing, while a powerful tool for optimization, is not without its challenges. Understanding and overcoming these hurdles is crucial for extracting meaningful insights.

In this comprehensive exploration, we delve into the common challenges in A/B testing, providing insights, real-world examples, and strategies for success.

Sample Size Pitfalls: The Importance of Statistical Power

- Challenge Overview: Inadequate sample sizes can compromise the reliability of A/B test results, leading to inconclusive or inaccurate findings.

Strategies for Success:

- Statistical Power: Embrace statistical power, ensuring your sample size is sufficient for detecting meaningful effects.

- Calculation Accuracy: Utilize online calculators to determine the required sample size, considering the baseline conversion rate, the minimum effect size you want to detect, the significance level, and the desired statistical power.

Misinterpretation of Results: Navigating Complexity

- Challenge Overview: A/B test results can be complex, leading to misinterpretation and incorrect conclusions.

Strategies for Success:

- Collaborative Analysis: Encourage cross-departmental collaboration to leverage diverse perspectives.

- Expert Consultation: Seek input from statistical experts to ensure accurate interpretation.

Implementation Challenges: Ensuring Precision

- Challenge Overview: The process of implementing A/B tests can be intricate, impacting the accuracy and reliability of results.

Strategies for Success:

- Testing Frameworks: Implement robust testing frameworks to ensure accurate deployment of variations.

- Quality Assurance: Conduct thorough QA checks to identify and rectify implementation issues.
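One QA check that can be automated is comparing the observed traffic split against the split you intended, often called a sample ratio check. The sketch below runs a chi-square goodness-of-fit test with one degree of freedom. The function name and the counts are hypothetical, and the warning threshold you pick is a judgment call.

```python
from math import erfc, sqrt


def sample_ratio_check(n_a, n_b, expected_share_a=0.5):
    """Chi-square goodness-of-fit test (1 degree of freedom) on the split."""
    total = n_a + n_b
    exp_a = total * expected_share_a
    exp_b = total - exp_a
    chi2 = (n_a - exp_a) ** 2 / exp_a + (n_b - exp_b) ** 2 / exp_b
    # For 1 degree of freedom the chi-square tail equals erfc(sqrt(chi2 / 2)).
    p_value = erfc(sqrt(chi2 / 2))
    return chi2, p_value


# Hypothetical counts from a test intended to split traffic 50/50.
chi2, p = sample_ratio_check(n_a=5_300, n_b=4_700)
print(f"chi2 = {chi2:.1f}, p = {p:.2g}")
```

A very small p-value here means the split is unlikely to be a fluke, which usually points to an implementation problem (for example, a variant that fails to load for some users) rather than a real behavioral difference.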

Biased Results: Eliminating Distortions

- Challenge Overview: Biases, whether in sample selection or test execution, can distort A/B test results, leading to unreliable insights.

Strategies for Success:

- Randomized Assignment: Ensure random assignment of users to variants to mitigate selection bias.

- Blind Testing: Implement blind testing where possible to reduce biases in user interactions.
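Random assignment is often implemented by hashing a stable user identifier, so the same user always sees the same variant without any stored state. The following is a minimal sketch of that idea. The function name, the experiment key, and the ID format are assumptions for illustration, not the method of any particular tool.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("A", "B")):
    """Deterministically map a user to a variant with a roughly even split."""
    # Including the experiment name keeps assignments independent across tests.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical usage: the same user gets the same answer on every call.
print(assign_variant("user-12345", "checkout-redesign"))
```

Because the assignment depends only on the user and the experiment name, it cannot be influenced by time of day, traffic source, or who happens to be running the test.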

Test Duration Challenges: Balancing Time and Accuracy

- Challenge Overview: Determining the optimal test duration without compromising accuracy poses a common challenge.

- Data Insight: Approximately 61% of companies carry out fewer than five A/B tests per month.

Strategies for Success:

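- Statistical Significance Threshold: Set the significance threshold and the required sample size before launch, and evaluate the result once that sample is reached. Stopping the first time the threshold is crossed inflates the false-positive rate.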

- Test Duration Planning: Plan test durations based on anticipated traffic and desired confidence levels.
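Planning duration from traffic is simple arithmetic, sketched below. The function name and the inputs (a required sample per variant and a daily visitor count) are hypothetical, and rounding up to whole weeks is a design choice made here so that each day of the week is covered equally.

```python
from math import ceil


def planned_duration_days(required_per_variant, daily_visitors, n_variants=2,
                          traffic_share=1.0):
    """Estimate days needed, rounded up to whole weeks."""
    total_needed = required_per_variant * n_variants
    # traffic_share is the fraction of daily visitors entered into the test.
    days = ceil(total_needed / (daily_visitors * traffic_share))
    return ceil(days / 7) * 7


# Hypothetical inputs: 8,155 visitors needed per variant, 1,500 visitors a day.
print(planned_duration_days(required_per_variant=8_155, daily_visitors=1_500))
```

If the estimate runs to many weeks, the usual options are to test a bolder change (a larger expected effect), include more of your traffic, or pick a higher-traffic page.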

Resource and Expertise Constraints: Meeting the Demands

- Challenge Overview: Many organizations face constraints in terms of resources and expertise needed for effective A/B testing.

- Data Insight: According to Econsultancy, a paltry 38% of companies are doing A/B testing.

Strategies for Success:

- Investment in Training: Allocate resources for ongoing training to enhance A/B testing capabilities.

- Outsourcing Options: Consider outsourcing A/B testing activities to specialized agencies if in-house expertise is limited.

In the dynamic landscape of digital optimization, acknowledging and overcoming challenges in A/B testing is integral to success.

By embracing statistical rigor, fostering collaboration, and implementing precise testing methodologies, businesses can navigate the complexities and unlock the full potential of A/B testing for informed decision-making and continuous improvement.

7. Mastering A/B Testing: Best Practices for Optimal Results

A/B testing, when executed with precision, is a powerful tool for refining digital strategies and maximizing user engagement.

In this comprehensive guide, we’ll delve into the best practices that underpin successful A/B testing initiatives. Backed by real-world examples and data-driven insights, these practices form the blueprint for unlocking the full potential of experimentation.

Clearly Defined Objectives: Setting the Foundation

Best Practice: Clearly articulate your objectives before embarking on A/B testing. Whether it’s boosting conversion rates, increasing engagement, or optimizing user journeys, a well-defined goal provides direction for experimentation.

Strategic Selection of Test Elements: The Building Blocks of Experimentation

Best Practice: Carefully choose the elements to test, focusing on those directly impacting user behavior. This could include headlines, images, calls-to-action (CTAs), or forms. For example, an e-commerce site may test different product page layouts to determine the most effective design for conversions.

Crafting Hypotheses: The Scientific Approach to Testing

Best Practice: Formulate clear hypotheses that predict the outcome of changes being tested. For instance, a hypothesis might state that changing the color of a CTA button will lead to increased click-through rates.

Utilizing Statistical Significance: Ensuring Reliable Results

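Best Practice: Choose a significance threshold before the test starts, commonly 95% confidence (p < 0.05), and evaluate the result once the planned sample size has been collected. Meeting the threshold makes it unlikely, though not impossible, that the observed difference is due to random chance.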

Segmentation and Targeting Strategies: Precision in Insights

- Data Insight: 77% of A/B testing is done on websites.

Best Practice: Implement segmentation to gain more granular insights into user behavior. Tailoring variations to specific audience segments allows for more targeted analysis. For example, an online retailer may segment audiences based on location to optimize regional preferences.

Regular Testing Cadence: Finding the Sweet Spot

- Data Insight: It is estimated that more than 70% of companies are running at least two tests a month.

Best Practice: Define a testing frequency that aligns with your objectives and resources. Regular testing ensures a steady stream of data without causing undue delays in optimization efforts.

Iterative Testing for Ongoing Optimization: Continuous Improvement

Best Practice: A/B testing is a continuous journey of improvement. Use insights from one test to inform the hypotheses of the next, creating a cycle of refinement and optimization.

Avoiding Common Pitfalls: Addressing Sample Size Challenges

Best Practice: Ensure your sample size is large enough to produce reliable results. Plan for adequate statistical power to reduce the risk of Type II errors (false negatives).

Visual Representation of Results: Enhancing Communication

Best Practice: Use visualizations such as line charts or heatmaps to represent A/B test results. Clear visuals aid in communicating complex data patterns to stakeholders.

Collaborative Analysis: Tapping into Diverse Perspectives

Best Practice: Encourage collaborative analysis involving stakeholders from various departments. Diverse perspectives contribute to more accurate interpretations and a holistic understanding of results.

Leveraging A/B Testing Tools: Beyond Implementation

Best Practice: Choose A/B testing tools that align with your needs. Platforms like Optimizely and VWO offer robust analytics features for in-depth analysis. Note that Google Optimize, long a popular free option, was discontinued by Google in September 2023.

Best Practice: Stay informed about the latest developments in A/B testing through literature and industry publications. Continuous learning enhances your expertise and keeps you at the forefront of experimentation.

As you navigate the landscape of A/B testing, these best practices serve as a roadmap to excellence.

From setting clear objectives to leveraging statistical significance and embracing iterative testing, each practice contributes to the success of your experimentation initiatives.

Remember, A/B testing is not just a tactic; it’s a strategic imperative for businesses aiming to optimize their digital presence. By adhering to these best practices and learning from each test, you pave the way for sustained success in the dynamic realm of digital optimization.

Conclusion

As we conclude this comprehensive guide on A/B testing, we’ve embarked on a journey through the intricacies of experimentation, data-driven decision-making, and the pursuit of digital excellence. A/B testing, often hailed as the cornerstone of optimization strategies, is not merely a technique—it’s a dynamic and strategic approach that empowers businesses to refine their digital landscapes with precision.

The Significance of A/B Testing: A Recap

In our exploration, we’ve witnessed the transformative power of A/B testing in unlocking insights that shape digital strategies.

From formulating hypotheses to analyzing results and embracing iterative testing, each stage contributes to a systematic and evidence-based approach to optimization.

Real-world Impact: Examples that Illuminate

Real-world examples have underscored the tangible impact of A/B testing. Whether it’s a simplified checkout process leading to a surge in conversions for an e-commerce platform or a strategically placed CTA button elevating click-through rates for a content-focused website, the success stories exemplify the practical application of A/B testing principles.

Data-Driven Decision-Making: The Core Tenet

Central to the A/B testing ethos is the commitment to data-driven decision-making.

Statistics and significance levels become the guiding lights, ensuring that conclusions drawn from test results are robust, reliable, and actionable.

The careful balance between statistical rigor and practical insights is the fulcrum upon which A/B testing pivots.

Continuous Improvement: The Iterative Mindset

A/B testing, we’ve learned, is not a one-off endeavor; it’s a continuous cycle of refinement and optimization.

The iterative mindset, embraced by industry leaders and digital pioneers, propels businesses toward sustained success. With each test, organizations learn, adapt, and evolve, creating a perpetual loop of improvement.

Challenges and Triumphs: Navigating the Terrain

In our exploration, we’ve acknowledged the challenges—sample size pitfalls, misinterpretation of results, and the need for collaborative analysis.

These challenges, however, are not roadblocks but rather stepping stones to mastery.

Strategies for overcoming these hurdles and implementing best practices provide a roadmap for those venturing into the realm of A/B testing.

Best Practices: The Pillars of Success

The best practices outlined in this guide stand as the pillars of success.

From setting clear objectives and strategically selecting test elements to leveraging statistical significance and embracing visualization for clarity, these practices form a cohesive framework for A/B testing excellence.

The lessons gleaned from real-world examples and industry insights serve as beacons guiding practitioners toward optimal outcomes.

A/B Testing Tools: Empowering the Journey

The adoption of A/B testing tools, as evidenced by industry surveys, reflects their integral role in the A/B testing process.

The Ongoing Quest for Knowledge: Learning Beyond

Finally, the quest for knowledge in the realm of A/B testing is an ongoing endeavor. Literature, resources, and continuous learning become catalysts for honing expertise and staying abreast of industry trends.

As practitioners deepen their understanding, the landscape of possibilities expands, unlocking new avenues for experimentation and optimization.

The Journey Continues: A/B Testing as Your Strategic Companion

As we conclude this comprehensive guide, it’s important to recognize that A/B testing is more than a methodology; it’s your strategic companion in the ever-evolving digital landscape.

It’s the compass guiding businesses toward informed decisions, enhanced user experiences, and ultimately, digital success.

The journey doesn’t end here—it’s a continuum of experimentation, analysis, and improvement.

Armed with the knowledge gleaned from this guide, practitioners are poised to navigate the terrain of A/B testing with confidence, turning challenges into opportunities and triumphs into stepping stones toward digital mastery.

So, as you embark on your A/B testing endeavors, remember that each test is a chapter in the story of optimization, and the possibilities are as vast as the digital landscape itself.

Happy testing, and may your journey be filled with insights, successes, and the continuous pursuit of digital excellence.

People Also Ask

What do you mean by AB testing?

A/B testing, or split testing, is a method in digital marketing and product development where two versions (A and B) of a webpage, email, or app are compared to determine which performs better. It involves presenting the variants to different users simultaneously, analyzing data, and making data-driven decisions to optimize for desired outcomes.

What is an example of AB testing?

For instance, in A/B testing, a website might test two versions of a call-to-action button—Variant A in red and Variant B in blue. By comparing user interactions with each variant, the business can determine which color leads to higher click-through rates and, subsequently, implement the more effective version.

Why do people use AB testing?

People use A/B testing to optimize digital elements. It provides empirical insights into user behavior, helping businesses make informed decisions on webpage design, content, and more. By comparing variants, A/B testing enhances user experiences and boosts key metrics like conversion rates.
